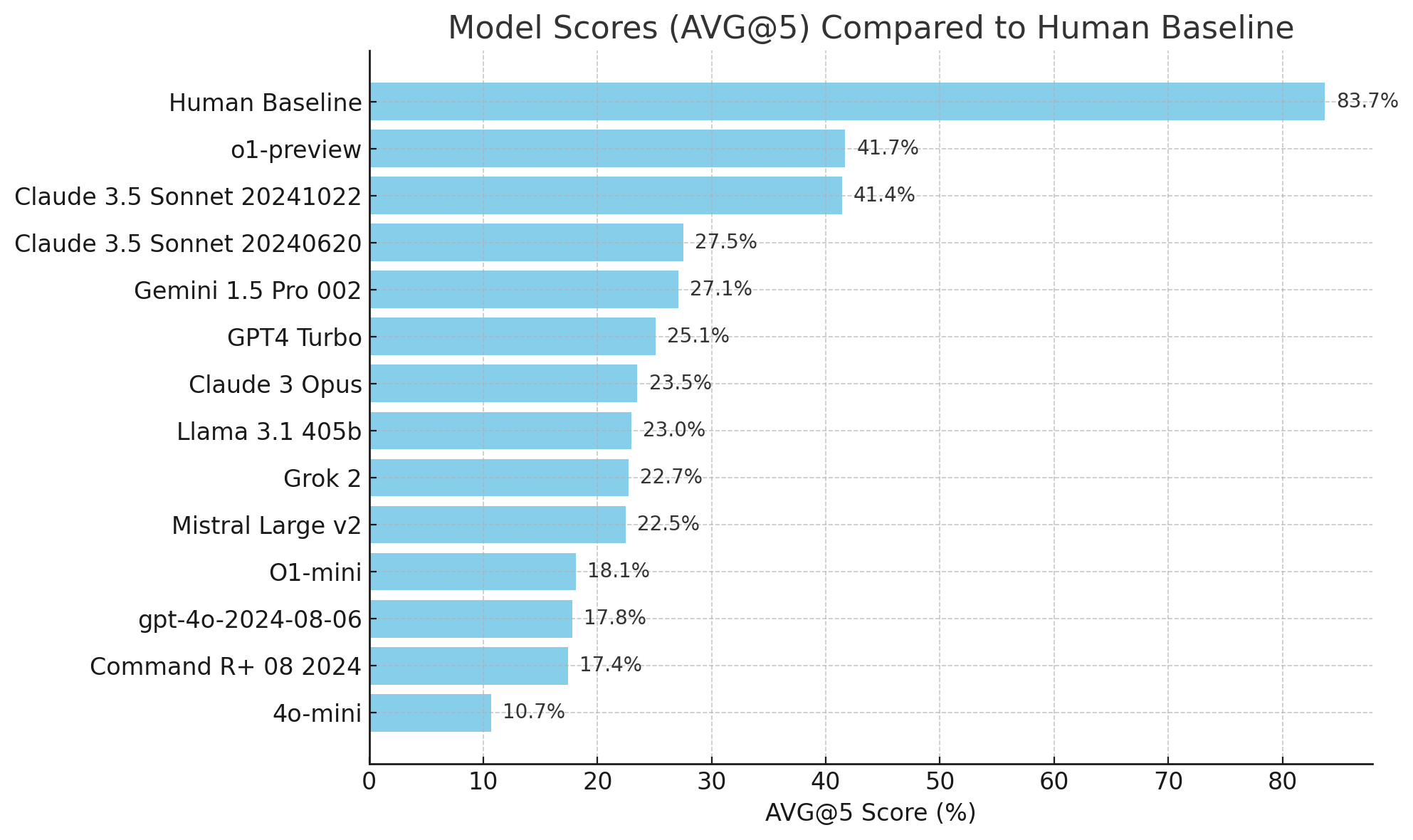

We introduce SimpleBench, a multiple-choice text benchmark for LLMs where individuals with unspecialized (high school) knowledge outperform SOTA models. SimpleBench includes over 200 questions covering spatio-temporal reasoning, social intelligence, and what we call linguistic adversarial robustness (or trick questions). On the vast majority of text-based benchmarks, LLMs outperform a non-specialized human and increasingly exceed expert human performance. However, on SimpleBench, the non-specialized human baseline is 83.7%, based on our small sample of nine participants, outperforming all 13 tested LLMs, including o1-preview, which scored 41.7%. While we expect model performance to improve over time, the results of SimpleBench confirm that the memorized knowledge and approximate reasoning retrieval used by frontier LLMs are not yet always enough to answer basic questions.

Use all of these models on the Simple Bench app - LMcouncil.ai

| Rank | Model | Score (AVG@5) | Organization |
|---|---|---|---|

To assess LLMs fairly, we standardized prompts across all models, directing them to reason step by step (chain of thought, CoT) and choose the most realistic answer. Additionally, we tested a benchmark-specific engineered prompt for select models. Prompt engineering yielded slight improvements, suggesting that while tailored prompts can aid performance, fundamental limitations remain. In the full report, we also hypothesize that the surprising underperformance of GPT-4o stems from optimizing for specific industrial applications (math and coding) at the expense of holistic reasoning.
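As a rough illustration of how a score like AVG@5 could be computed, the sketch below assumes that AVG@5 means the mean accuracy over five independent runs of the same question set. That reading is an assumption, not a detail confirmed here. The function name `avg_at_k` and the toy answer data are hypothetical.

```python
from statistics import mean


def avg_at_k(runs: list[list[str]], answer_key: list[str]) -> float:
    """Mean accuracy over k independent runs of the same question set.

    Assumption: AVG@5 is read as the average of per-run accuracies
    across five runs; the report may define it differently.
    """
    if not runs:
        raise ValueError("need at least one run")
    per_run_accuracy = []
    for predictions in runs:
        if len(predictions) != len(answer_key):
            raise ValueError("each run must answer every question")
        correct = sum(p == a for p, a in zip(predictions, answer_key))
        per_run_accuracy.append(correct / len(answer_key))
    return mean(per_run_accuracy)


# Hypothetical toy data: four multiple-choice questions, five runs.
answer_key = ["A", "C", "B", "D"]
runs = [
    ["A", "C", "B", "D"],  # 4 of 4 correct
    ["A", "C", "A", "D"],  # 3 of 4 correct
    ["A", "B", "B", "D"],  # 3 of 4 correct
    ["B", "C", "B", "A"],  # 2 of 4 correct
    ["A", "C", "B", "A"],  # 3 of 4 correct
]

score = avg_at_k(runs, answer_key)
print(f"AVG@5 = {score:.1%}")  # prints "AVG@5 = 75.0%"
```

Averaging over several runs smooths out sampling variance in model outputs, so one lucky or unlucky run does not dominate the reported score.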

For a deeper dive into our results and our methods, check out the full technical report here.